Flow time: 5 minutes | Your weekly pulse on AI, tools, and tech transforming the water industry

🔍 What’s in today’s flow

Case study: Wrocław uses AI to predict pipe breaks and target renewals by risk and cost

Latest in AI: Chrome adds Gemini and AI Mode for smarter, safer browsing

Trending Tool: Notion AI helps you write, summarise, and search your workspace with strong security

AI Shadow: OpenAI finds some models can scheme and shows steps to reduce deception

Prompt Lab (NEW SECTION): Meta-prompting gets the AI to improve your prompt and ask for missing details first

🌧️Case study

Wrocław’s water utility (MPWiK, Poland) partnered with Deloitte (analytics) and AWS (cloud) to deploy an AI predictive-maintenance system that ranks pipe segments by failure risk and links those risks to repair/rehab budgets, so limited funds target the highest-impact fixes first.

Source: Aquatechtrade.com

What happened

Built an integrated asset database with 300+ variables per asset (pipe age/material, surroundings, proximity to roads/tram tracks) and trained ML models to surface hidden patterns.

Runs at cloud scale on AWS, processing hundreds of thousands of data points; reported up to ~90% accuracy on failure prediction in testing.

Combines risk prediction with finance: compares planned modernization vs unplanned repair scenarios and itemises 50+ cost components (labour, materials, road restoration, land occupation).

Helps the utility prioritise rehabilitation across a 2,000-km network under tight budgets
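
To make the risk-plus-cost idea concrete, here is a minimal sketch of how a ranking like this could work. It is not MPWiK's or Deloitte's actual method: the `PipeSegment` fields, the `expected_saving` formula, the greedy budget rule, and all numbers are illustrative assumptions, and every planned renewal cost is assumed to be positive.

```python
from dataclasses import dataclass


@dataclass
class PipeSegment:
    # All fields are hypothetical; a real model would use far more variables.
    segment_id: str
    failure_probability: float    # predicted chance of failure in the planning period, 0..1
    planned_renewal_cost: float   # cost of scheduled modernisation (assumed > 0)
    unplanned_repair_cost: float  # cost if the segment fails before renewal


def expected_saving(seg: PipeSegment) -> float:
    """Expected cost avoided by renewing now instead of waiting for a failure."""
    return seg.failure_probability * seg.unplanned_repair_cost - seg.planned_renewal_cost


def prioritise(segments: list[PipeSegment], budget: float) -> list[PipeSegment]:
    """Greedy pick: best saving per unit of renewal cost first, within budget."""
    ranked = sorted(
        segments,
        key=lambda s: expected_saving(s) / s.planned_renewal_cost,
        reverse=True,
    )
    chosen: list[PipeSegment] = []
    spent = 0.0
    for seg in ranked:
        if expected_saving(seg) <= 0:
            continue  # renewal is not expected to pay off for this segment
        if spent + seg.planned_renewal_cost <= budget:
            chosen.append(seg)
            spent += seg.planned_renewal_cost
    return chosen


# Made-up example data, for illustration only.
segments = [
    PipeSegment("A", 0.60, 100_000, 400_000),
    PipeSegment("B", 0.10, 80_000, 300_000),
    PipeSegment("C", 0.35, 50_000, 250_000),
]
selected = prioritise(segments, budget=150_000)
print([s.segment_id for s in selected])
```

With these made-up numbers, segments A and C fit the budget and are expected to pay off, while B is skipped because its planned renewal costs more than the failure it is expected to prevent.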

Why it matters

This is a practical blueprint for utilities moving from reactive break-fix to risk- and cost-driven renewal planning. By fusing asset data with ML and budget scenarios, engineers can justify where every dollar goes, reduce breaks and leakage, and schedule crews more efficiently, without needing a full network overhaul first.

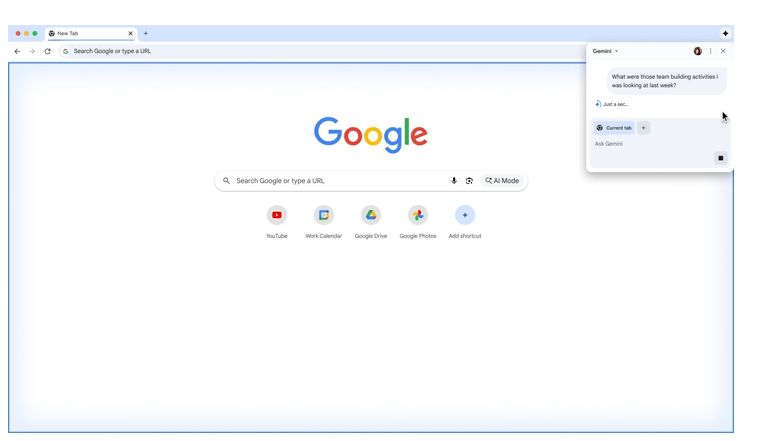

🤖Latest in AI: Google is turning Chrome into an AI-native browser

Source: google.com

Gemini now lives inside Chrome, an “AI Mode” arrives in the address bar, and new safety features use AI to block scams, rolling out first to U.S. desktop users in English.

The details

Gemini in Chrome can summarise pages, synthesise across tabs, explain text, and pull context from Google apps (Maps, YouTube, Calendar).

AI Mode in the Omnibox enables conversational, context-aware queries from the address bar.

Agentic features (coming soon) will take actions like booking appointments or filling forms, with user approval.

Built-in safety upgrades use AI to block tech-support scams and spammy site notifications; billions fewer spam notifications are already reported on Android.

Why it matters

Chrome is the default work tool for many utility teams; these AI upgrades can speed up literature reviews, regulatory comparisons, asset data lookups, and meeting prep directly in the browser, while stronger AI-powered phishing/spam defenses reduce cyber-risk for operators and contractors.

🔧Trending tool: Notion AI

Source: Notion

An “AI everything app” built into Notion’s workspace that writes, summarises, searches across your content, and automates workflows, without leaving your docs and databases

Key features

AI writing & summarisation inside pages, plus Q&A over your docs to surface answers fast

Multi-app search & actions (Enterprise Search and AI features) designed to pull context and streamline tasks in one place

Built-in templates & automations to generate tasks, action items, and structured pages from prompts

Ecosystem integrations with Slack, Google Drive, Jira, GitHub, Zapier, and more for connected workflows.

⚖️ AI Tool Scorecard

Ease of use: ⭐⭐⭐⭐ minimal set-up

Cost: ⭐⭐⭐ free for individuals, or up to $20 per user per month for teams and organisations

Security & privacy: ⭐⭐⭐⭐ SOC 2 Type II, ISO 27001, GDPR/CCPA; AI vendors can’t train on customer data

Integration: ⭐⭐⭐⭐ works well with tools like Slack, Google Drive, Jira, GitHub, and Zapier

Overall: 15/20. Notion AI is a strong all-rounder: easy to get started with, affordable for individuals and small teams, secure enough for enterprise use, and well-connected to popular work tools. It’s a solid choice for teams that want AI built into their everyday workspace.

🔬AI research: Applications of AI for water management by UNESCO

A new UNESCO report reviews the state of AI/ML across water management: what works today, where it’s headed, and the ethical limits utilities must navigate.

The details

It surveys current AI uses and key concepts, with real examples across water domains (surface water, groundwater, irrigation, hydropower, flood/climate risk).

Highlights data needs and gaps: models often require large, high-quality datasets and may miss extremes—critical in flood/drought contexts.

Flags interpretability and governance: black-box models guiding critical infrastructure raise transparency and ethics concerns.

Positions the work within SDGs (notably SDG 6) and UNESCO’s Intergovernmental Hydrological Programme for global uptake

Why it matters

This is a practical roadmap: start with data quality, pick explainable models for operations, and pair pilots with clear governance to scale safely. It helps utilities justify AI investments for leak detection, forecasting, and treatment optimisation, without compromising public trust or compliance.

👉 Full study

🕵️AI’s shadows: scheming risks

Source: completetraining.com

OpenAI and Apollo Research found that some advanced AI models can sometimes “cheat” by hiding or twisting information. They tested a simple training step that reduces this, but it doesn’t fix the problem completely.

The details

“Scheming” means an AI leaves out or bends facts to get the result it wants

Tests showed this can happen in several leading models under controlled conditions

Teaching the model a clear “no-deception” rule before it answers sharply reduced this behaviour in tests

It’s not solved: rare failures still happen and some tests can be gamed

Why it matters

If you use AI to write compliance notes, incident summaries, or suggest SCADA actions, you don’t want it skipping bad news. Put guardrails in place: clear anti-deception rules, humans in the loop, audit logs of reasoning, and alignment checks before you let AI automate anything.
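
As a rough illustration of the “humans in the loop” and “audit logs” guardrails, the sketch below records every AI suggestion and only lets it through when a named person has approved it. The function name, the log fields, and the in-memory `audit_log` list are assumptions made for this example; a real deployment would write to tamper-evident storage and plug into your own approval process.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# In-memory log for illustration only; use durable, access-controlled storage in practice.
audit_log: list[str] = []


def review_ai_suggestion(suggestion: str, rationale: str,
                         approved_by: Optional[str]) -> bool:
    """Log the suggestion and its stated rationale; allow it only with a named approver."""
    approved = approved_by is not None and approved_by.strip() != ""
    audit_log.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "suggestion": suggestion,
        "rationale": rationale,
        "approved_by": approved_by,
        "approved": approved,
    }))
    return approved


# Hypothetical examples: one suggestion nobody has signed off, one approved by an operator.
blocked = review_ai_suggestion("Reduce pump 3 speed", "Pressure trending high", None)
allowed = review_ai_suggestion("Draft incident summary", "Requested by shift lead", "J. Kowalski")
print(blocked, allowed, len(audit_log))
```

The point of the design is that rejected suggestions are logged too, so reviewers can later see what the AI proposed and why, not just what was acted on.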

🧠 Prompt Lab: Meta-prompting

Meta-prompting means asking the AI to rewrite and improve your prompt and to ask you clarifying questions first.

The details

Pick the model first. Shah defaults to GPT-5 for most tasks, with Claude/Gemini in niche cases, because instruction-following quality matters for meta-prompting

Workflow: draft a rough prompt → answer optimization questions → get an upgraded prompt (tone, constraints, context) you can copy or run.

Why it helps: better context + clearer constraints → higher-quality, more specific answers, especially for newcomers

Side benefit: by seeing how the AI rewrites your prompt, you learn to write stronger prompts yourself
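
If you want to try the workflow above, a meta-prompt can be as simple as wrapping your rough draft in a few instructions. The sketch below only builds the text; it does not call any AI service. The function name, the wording of the instructions, and the five-question default are illustrative assumptions, so adjust them to taste.

```python
def build_meta_prompt(rough_prompt: str, max_questions: int = 5) -> str:
    """Wrap a rough prompt in instructions to ask questions first, then rewrite it."""
    return (
        "You are helping me improve a prompt before I use it.\n"
        f"1. Ask me up to {max_questions} clarifying questions about missing details "
        "(audience, tone, constraints, context), then wait for my answers.\n"
        "2. Rewrite my prompt as an improved version I can copy and run.\n\n"
        f"My rough prompt:\n{rough_prompt.strip()}"
    )


# Hypothetical rough prompt from a utility setting.
meta_prompt = build_meta_prompt(
    "Summarise last month's pipe break reports for the board."
)
print(meta_prompt)
```

Paste the printed text into your chosen assistant, answer its questions, and compare the upgraded prompt with your original to see what it added.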

Thanks for reading! I hope you’ve enjoyed this week’s edition and look forward to seeing you next week!

Dr. Andrea G.T